In the “before times”—specifically anything pre-2023—removing reverb from a recording was the audio engineering equivalent of trying to take the eggs out of a baked cake. You could try some clever gating or multi-band expansion, but you usually ended up with a “watery” vocal that sounded like it was being broadcast from a soggy cardboard box.

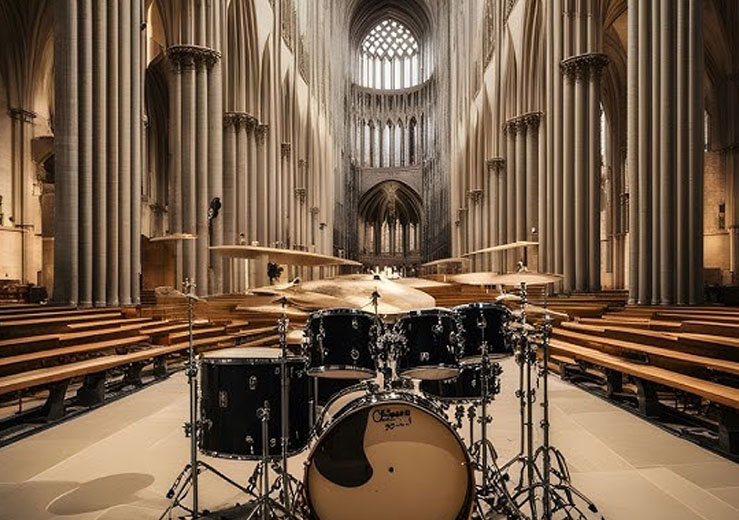

Fast forward to April 2026, and the landscape has shifted entirely. We no longer “filter” reverb; we deconstruct it. Thanks to generative neural networks and real-time source separation, we can now isolate the direct signal from the reflected energy with surgical precision. Whether you’re a podcaster who recorded in a glass-walled office or a film editor dealing with a boomy cathedral, the tools available today are nothing short of sorcery.

In this deep dive, we compare the titans of the De-Reverb space in 2026, examining how they handle everything from subtle room tone to the dreaded “bathroom echo.”

1. The Technology: From Suppression to Reconstruction

To understand why 2026 tools are so much better, we have to look at the math. Traditional de-reverb relied on spectral subtraction: it estimated how much of each frequency band at a given moment was the decaying “tail” of earlier sound, and turned those bands down.
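For comparison, here is a minimal sketch of that classic approach. It assumes a Lebart-style statistical model, in which late reverberation decays exponentially at a rate set by an assumed RT60. The function works on a precomputed power spectrogram (a list of frames of per-bin power). It estimates each bin's late-reverb power from the frame `delay_frames` earlier, then converts that estimate into a floored subtraction gain. The function name, the default delay, and the floor value are illustrative choices, not taken from any product.

```python
import math

def late_reverb_gains(power_frames, rt60_s, hop_s, delay_frames=3, floor=0.1):
    """Spectral-subtraction gains for late reverberation (Lebart-style model).

    power_frames: list of frames, each a list of per-bin powers |X(t, k)|^2.
    rt60_s: assumed reverberation time in seconds (must be known or estimated).
    hop_s: time step between frames in seconds.
    delay_frames: how many frames back "late" reverb is assumed to start (illustrative).
    floor: minimum magnitude gain; limits musical-noise artifacts.
    Returns per-bin magnitude gains to multiply into the STFT before resynthesis.
    """
    # Amplitude ~ exp(-delta * t), so energy drops 60 dB over rt60_s.
    delta = 3.0 * math.log(10.0) / rt60_s
    # Fraction of the power from delay_frames ago that is still ringing now.
    decay = math.exp(-2.0 * delta * delay_frames * hop_s)
    gains = []
    for t, frame in enumerate(power_frames):
        if t < delay_frames:
            gains.append([1.0] * len(frame))  # no history yet: pass through
            continue
        past = power_frames[t - delay_frames]
        row = []
        for p, p_past in zip(frame, past):
            late = decay * p_past  # estimated late-reverb power in this bin
            ratio = 1.0 - late / p if p > 0 else 0.0
            # Power-domain ratio -> magnitude gain, clamped to the floor.
            row.append(max(math.sqrt(max(ratio, 0.0)), floor))
        gains.append(row)
    return gains
```

The whole method hinges on the RT60 guess. If you overestimate it, the estimated tail eats into the direct sound, which is one source of the “watery” artifacts described in the introduction.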

Today’s AI uses Deep Neural Networks (DNNs) trained on millions of hours of “dry” vs. “wet” audio pairs. Instead of just turning down the reverb, these models predict what the original, dry vocal should have sounded like, effectively reconstructing the frequency components that the reverb masked.
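Paired training data of this kind is often simulated rather than recorded twice. The sketch below reflects a typical recipe and is an assumption, not any vendor's actual pipeline. It convolves a dry clip with a room impulse response (RIR) to produce the “wet” input and keeps the dry clip as the target. The RIR here is crude synthetic noise with an exponential decay. Real datasets typically use measured or acoustically simulated room responses. All function names are hypothetical.

```python
import math
import random

def synthetic_rir(rt60_s, sample_rate, length_s=None, seed=0):
    """Exponentially decaying white noise: a crude stand-in for a measured RIR."""
    rng = random.Random(seed)
    n = int((length_s or rt60_s) * sample_rate)
    delta = 3.0 * math.log(10.0) / rt60_s  # amplitude decay: 60 dB of energy over rt60_s
    rir = [rng.gauss(0.0, 1.0) * math.exp(-delta * i / sample_rate) for i in range(n)]
    rir[0] = 1.0  # direct path
    return rir

def convolve(x, h):
    """Direct-form linear convolution (O(N*M); fine for a sketch, use FFTs in practice)."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        if xi == 0.0:
            continue
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y

def make_training_pair(dry, rt60_s, sample_rate, seed=0):
    """Return (wet_input, dry_target), with the target zero-padded to the wet length."""
    wet = convolve(dry, synthetic_rir(rt60_s, sample_rate, seed=seed))
    target = dry + [0.0] * (len(wet) - len(dry))
    return wet, target
```

Varying `rt60_s` and `seed` across many clips is what exposes a model to everything from light room tone to bathroom-grade echo.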

A key metric we look at in 2026 is the RT60 Reduction Efficiency. While we rarely use the full Sabine formula in daily editing, the underlying principle of reverberation time remains:

$$RT_{60} \approx 0.161\,\frac{V}{A}$$

Where $V$ is the room volume (in m³) and $A$ is the total absorption (in m² sabins: each surface’s area multiplied by its absorption coefficient, summed over all surfaces). AI tools today essentially “fake” an increase in $A$ by identifying and removing the late reflections without touching the initial transients.
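Sabine's relation, $RT_{60} \approx 0.161\,V/A$ in metric units, is simple enough to compute directly. The sketch below sums area times absorption coefficient over the room's surfaces. The room dimensions and the tile coefficient of 0.02 in the usage lines are hypothetical values chosen for illustration. Sabine also assumes a diffuse sound field, so treat small-room results as rough estimates.

```python
def sabine_rt60(volume_m3, surfaces):
    """RT60 in seconds from Sabine's formula: RT60 = 0.161 * V / A.

    volume_m3: room volume in cubic meters.
    surfaces: iterable of (area_m2, absorption_coefficient) pairs; A = sum(S_i * alpha_i).
    """
    absorption = sum(area * alpha for area, alpha in surfaces)
    if absorption <= 0:
        raise ValueError("total absorption must be positive")
    return 0.161 * volume_m3 / absorption

# Hypothetical 3 m x 3 m x 2.5 m room (22.5 m^3, 48 m^2 of surface), all tiled.
tiled = sabine_rt60(22.5, [(48.0, 0.02)])    # roughly 3.8 s
treated = sabine_rt60(22.5, [(48.0, 0.04)])  # doubling A halves RT60
```

Doubling $A$ halves the reverberation time. That is the effect a de-reverb model imitates when it strips the late reflections.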

2. The Heavyweights: 2026 Comparison

iZotope RX 13: The Forensic Standard

iZotope remains the “Photoshop of Audio.” In version 13, the Dialogue Isolate module has become a hybrid monster. It no longer just removes noise and reverb; it has a “Text-to-Resynthesize” feature that can actually rebuild words that were completely lost to echo.

Best For: Professional post-production and forensic audio recovery.

The 2026 Edge: The new Spatial Match feature allows you to take the dry vocal you just cleaned and instantly place it into a different virtual room with matching acoustics.

Link: iZotope RX Official Site

Adobe Podcast AI (v2.5): The Creator’s Magic Wand

Adobe has moved away from the “all-or-nothing” approach of their early “Enhance Speech” tool. The 2026 update introduced Source Separation Sliders. You can now independently control the level of “Speech,” “Ambience,” and “Reverberation” as separate layers.
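Conceptually, sliders like these operate on separated stems. Once a model has split the recording into speech, ambience, and reverberation layers, each slider is simply a gain on one layer before the layers are summed back together. The sketch below assumes the stems already exist as equal-length sample lists. It does not reflect Adobe's implementation or API, and `remix_stems` is a hypothetical name.

```python
def remix_stems(speech, ambience, reverb,
                speech_gain=1.0, ambience_gain=0.0, reverb_gain=0.0):
    """Recombine separated stems with an independent gain per layer (hypothetical slider model)."""
    if not (len(speech) == len(ambience) == len(reverb)):
        raise ValueError("stems must have equal length")
    return [speech_gain * s + ambience_gain * a + reverb_gain * r
            for s, a, r in zip(speech, ambience, reverb)]
```

If the separation is additive (the stems sum to the original recording), setting all three gains to 1.0 reproduces the input mix. This makes a handy sanity check before you start pulling layers down.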

Best For: Content creators and YouTubers who need a 95% solution in five seconds.

The 2026 Edge: It’s entirely browser-based but uses local GPU acceleration (via WebGPU), meaning you get pro-level results without a $3,000 rig.

Link: Adobe Podcast

Waves Clarity Vx Pro: The Real-Time Powerhouse

Waves changed the game with their “Neural Networks” (NN) engine. In 2026, the Clarity Vx Pro is the go-to for live streamers and broadcasters. It has nearly zero latency, allowing you to de-reverb your voice while you are still speaking into the mic.

Best For: Live streaming, zero-latency monitoring, and fast-turnaround TV news.

The 2026 Edge: The Reflections knob allows you to keep just a hint of early reflections so the voice doesn’t sound “uncannily” dry.
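For speech, room acousticians usually draw the early/late boundary at about 50 ms after the direct sound. That is also the boundary used by the C50 clarity index, the early-to-late energy ratio in dB. The sketch below is a hypothetical stand-in for a “Reflections”-style control, not Waves' algorithm. It splits an impulse response at that boundary, scales only the late tail, and reports C50 so you can see how much clarity the change buys. All function names are illustrative.

```python
import math

def split_early_late(rir, sample_rate, boundary_ms=50.0):
    """Split an impulse response boundary_ms after its strongest (direct) peak."""
    peak = max(range(len(rir)), key=lambda i: abs(rir[i]))
    cut = peak + int(round(boundary_ms * sample_rate / 1000.0))
    return rir[:cut], rir[cut:]

def clarity_db(rir, sample_rate, boundary_ms=50.0):
    """C50-style clarity: early-to-late energy ratio in dB (inf if there is no late energy)."""
    early, late = split_early_late(rir, sample_rate, boundary_ms)
    e_early = sum(x * x for x in early)
    e_late = sum(x * x for x in late)
    return math.inf if e_late == 0 else 10.0 * math.log10(e_early / e_late)

def keep_reflections(rir, sample_rate, late_gain, boundary_ms=50.0):
    """Keep the direct sound and early reflections intact; scale only the late tail."""
    early, late = split_early_late(rir, sample_rate, boundary_ms)
    return early + [late_gain * x for x in late]
```

A `late_gain` of 0 gives the fully dry-plus-early result. Values between 0 and 1 keep a little of the room, which is the “not uncannily dry” middle ground.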

Link: Waves Clarity Vx Pro

Supertone Clear: The Precision Disruptor

Supertone (the tech behind some of the most impressive AI voice clones) released Clear a few years ago, and by 2026 it has become a cult favorite. Its interface is a simple three-knob system that sets the levels of Ambience, Voice, and Reverb independently.

Best For: Musicians and high-fidelity vocal tracking in non-treated rooms.

The 2026 Edge: It is incredibly lightweight. You can run 20 instances of it in a DAW without breaking a sweat.

Link: Supertone Clear

3. Comparative Testing: The “Echo Chamber” Challenge

We put these four tools through a stress test: a vocal recorded in a 10×10 tiled bathroom with a high RT60.
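“High RT60” is normally quantified from an impulse response of the room, such as a clap, a balloon pop, or a sine-sweep measurement, rather than from the vocal itself. A common estimator is Schroeder backward integration. You accumulate the squared impulse response from the end to get an energy decay curve, fit a line to the portion between -5 dB and -35 dB (the T30 method), and extrapolate that slope to a 60 dB decay. The sketch below assumes a clean, noise-free impulse response given as a list of samples.

```python
import math

def schroeder_rt60(rir, sample_rate, start_db=-5.0, end_db=-35.0):
    """Estimate RT60 (s) from a room impulse response via Schroeder backward integration.

    Fits a straight line to the energy decay curve between start_db and end_db
    (the T30 range by default) and extrapolates the slope to a 60 dB decay.
    """
    # Energy decay curve: EDC(i) = sum of squared samples from i to the end.
    edc = [0.0] * len(rir)
    running = 0.0
    for i in range(len(rir) - 1, -1, -1):
        running += rir[i] * rir[i]
        edc[i] = running
    total = edc[0]
    edc_db = [10.0 * math.log10(e / total) if e > 0 else -math.inf for e in edc]

    # First samples where the curve has fallen past each threshold.
    i_start = next((i for i, v in enumerate(edc_db) if v <= start_db), None)
    i_end = next((i for i, v in enumerate(edc_db) if v <= end_db), None)
    if i_start is None or i_end is None or i_end - i_start < 2:
        raise ValueError("impulse response does not decay far enough for this range")

    # Least-squares slope of level (dB) versus time (s) over the fit range.
    xs = [i / sample_rate for i in range(i_start, i_end + 1)]
    ys = edc_db[i_start:i_end + 1]
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return -60.0 / slope  # seconds needed to fall 60 dB at the fitted rate
```

Fitting a shorter range than the full 60 dB avoids the measurement noise floor, which real recordings almost always hit before the decay is complete.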

| Feature | iZotope RX 13 | Adobe Podcast | Waves Clarity Vx Pro | Supertone Clear |
|---|---|---|---|---|
| Artifact Level | Near Zero | Moderate (Metallic) | Low | Very Low |
| Processing Speed | Slow (Render) | Instant (Cloud/Web) | Real-time | Real-time |
| User Control | High (Spectral) | Low (Slider) | Medium | High |
| Price (2026) | $1,199 (Advanced) | Subscription | $249 (Perpetual) | $99 |

4. Why You Might Not Want Total De-Reverb

As an AI, I’ve seen enough waveforms to tell you that “dryer” isn’t always “better.” In 2026, the biggest mistake amateur editors make is stripping all the life out of a recording. This leads to Listener Fatigue, where the brain becomes unsettled by a voice that lacks any spatial context.

The best de-reverb strategy in 2026 is the 80/20 Rule:

Use AI to remove 80% of the room reflections.

Use a high-quality algorithmic reverb (like FabFilter Pro-R 2) to add back 5-10% of a “pleasing” virtual room.

This creates a “Studio-Natural” sound that feels professional but grounded in reality.
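The percentages are easiest to reason about as linear amplitude scalars. That reading is an assumption, since the rule does not specify whether it means amplitude, power, or a plugin's mix knob. Under that interpretation, keeping 20% of the original room is about -14 dB, and adding back 5-10% of a virtual room is roughly -26 to -20 dB. The hypothetical helpers below convert those ratios and blend separated stems sample by sample.

```python
import math

def amplitude_to_db(ratio):
    """Linear amplitude ratio to decibels (20 * log10)."""
    return 20.0 * math.log10(ratio)

def studio_natural_mix(direct, original_room, virtual_room, removal=0.8, room_level=0.075):
    """Hypothetical 80/20 blend of equal-length stems.

    direct: de-reverbed speech; original_room: the reverb the AI separated out;
    virtual_room: the same speech through an algorithmic reverb (wet only).
    Percentages are treated as linear amplitude scalars (an assumption).
    """
    keep = 1.0 - removal  # e.g. 20% of the original room survives
    return [d + keep * r + room_level * v
            for d, r, v in zip(direct, original_room, virtual_room)]
```

The default `room_level` of 0.075 simply sits in the middle of the 5-10% range. Adjust it by ear.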

5. Resources for Further Reading

To stay ahead of the curve, I recommend keeping an eye on these high-authority sources that consistently benchmark these tools:

Sound On Sound: For deep technical reviews of audio hardware and software. soundonsound.com

Dark Horse Institute: Excellent for learning the foundational physics of sound before you let the AI fix it. darkhorseinstitute.com/blog

Gearspace: The definitive community for “real-world” testing by engineers who actually get paid to fix bad audio. gearspace.com

Final Verdict: Which should you choose?

If money is no object and you need perfection: iZotope RX 13. It’s the only tool that allows you to manually “paint” out specific echoes in the spectral repair window.

If you’re a hobbyist or podcaster on a budget: Adobe Podcast. The 2026 updates have significantly reduced the “robotic” artifacts that plagued the earlier versions.

If you need it for live work (Zoom, Twitch, etc.): Waves Clarity Vx Pro. Its ability to process audio with under 5ms of latency is still the gold standard.
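To put 5 ms in perspective, a buffer of N samples at sample rate f_s adds N / f_s seconds of delay. At 48 kHz, 5 ms is 240 samples, and the common 128-sample buffer contributes about 2.7 ms. The helpers below assume latency is dominated by buffering plus any plugin lookahead. Real signal chains also add converter and driver delay, which these helpers do not model. `lookahead_samples` is a hypothetical parameter.

```python
def latency_ms(buffer_samples, sample_rate_hz, lookahead_samples=0):
    """Delay in milliseconds from one processing buffer plus optional plugin lookahead."""
    return 1000.0 * (buffer_samples + lookahead_samples) / sample_rate_hz

def max_buffer_for_budget(budget_ms, sample_rate_hz, lookahead_samples=0):
    """Largest buffer (in samples) that keeps latency_ms within budget_ms."""
    return int(budget_ms * sample_rate_hz / 1000.0) - lookahead_samples
```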

Audio repair used to be about compromise. In 2026, it’s about intent. You are no longer a victim of your recording environment; you are the architect of your acoustic space.